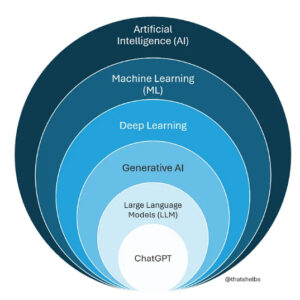

AI is often discussed as a single concept, but different types of AI do different kinds of work. As shown in the diagram, GenAI (generative AI) is one specific subset of AI, alongside machine learning systems that analyse existing data to classify, score or predict outcomes.

Machine learning systems typically produce constrained outputs, such as probabilities or categories. A familiar example is a streaming platform recommending a new series based on what you have watched and liked before. GenAI, by contrast, is designed to generate new content, such as text or images, in response to a prompt. Tools like ChatGPT are built on large language models. These models produce fluent and plausible outputs, but they do not check whether those outputs are correct, and they do not understand what they produce.

This distinction matters for teachers because it shapes what each type of AI should be used for, and when its use supports learning goals rather than bypassing them. Machine learning can help identify patterns or risks in existing data. GenAI is better suited to supporting explanations, practice, feedback or idea generation, provided it is paired with intentional educational design.

How GenAI and LLMs work

GenAI that generates text (e.g. ChatGPT or Claude) is based on Large Language Models (LLMs), which learn language patterns from ‘training data’ (a vast collection of existing texts from books, articles and websites). For each step in a sentence, an LLM estimates how likely every possible next word is, and then samples from these possibilities rather than always choosing the single most likely word.

Repeating this process word by word enables LLMs to generate complex and well-written texts (called ‘output’) in different styles and languages within seconds, based on a user prompt (a set of instructions or a question).
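To make the difference between "choosing the most likely word" and "sampling" concrete, here is a minimal Python sketch. The probabilities are invented for illustration only; a real LLM computes them with a neural network over a vocabulary of many thousands of words and word pieces. The names `next_word_probs`, `greedy_choice` and `sample_choice` are hypothetical and not part of any AI tool.

```python
import random

# Hypothetical, hand-made probabilities for the word that follows
# "The students opened their ..." (a real LLM would compute these itself).
next_word_probs = {
    "books": 0.55,
    "laptops": 0.25,
    "notebooks": 0.15,
    "windows": 0.05,
}


def greedy_choice(probs: dict[str, float]) -> str:
    """Always pick the single most likely next word."""
    return max(probs, key=probs.get)


def sample_choice(probs: dict[str, float], rng: random.Random) -> str:
    """Pick a next word at random, weighted by its probability."""
    words = list(probs)
    weights = list(probs.values())
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so this run is repeatable
print("Greedy:", greedy_choice(next_word_probs))
print("Sampled:", [sample_choice(next_word_probs, rng) for _ in range(5)])
```

The greedy choice is the same every time, while the sampled words vary from run to run (try a different seed). This kind of variation is one reason the same prompt can produce different answers.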

GenAI and limitations

Despite its many benefits, GenAI also has limitations. Users should be mindful of functional issues and ethical concerns, and use critical thinking to analyse and evaluate the output generated by GenAI.

Have a look at the e‑learning ‘AI literacy for teachers’ or watch the videos below to learn more about:

- the generation of false information (‘hallucinations’)

- bias in GenAI systems

- privacy issues

- sustainability issues